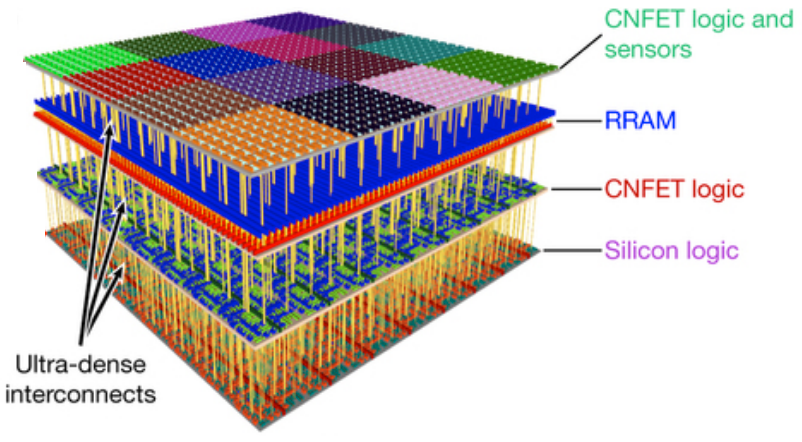

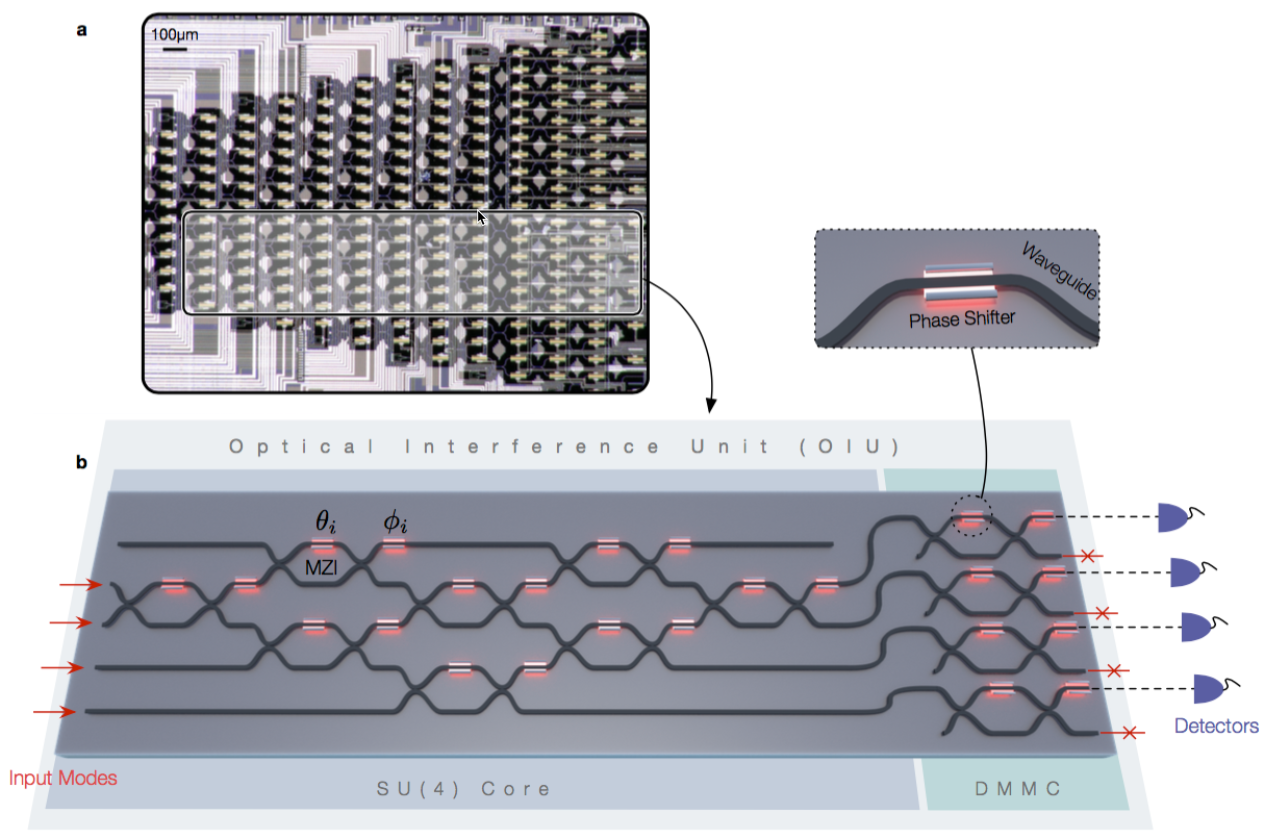

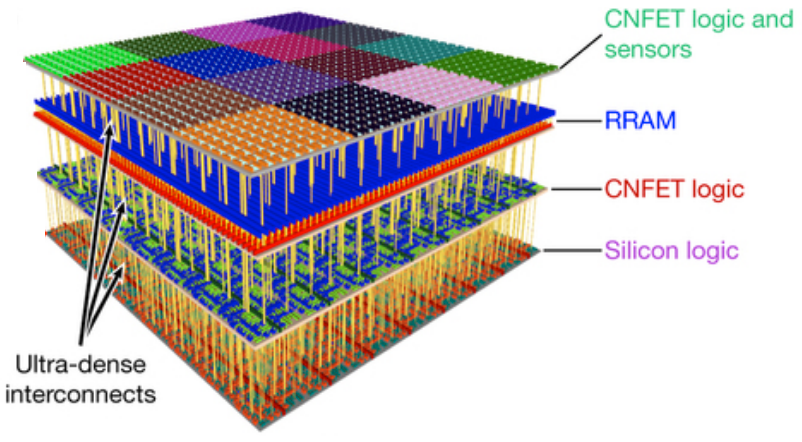

Four vertical layers in new 3D nanosystem chip. Top (fourth layer): sensors and more than one million carbon-nanotube field-effect transistor (CNFET) logic inverters; third layer, on-chip non-volatile RRAM (1 Mbit memory); second layer, CNFET logic with classification accelerator (to identify sensor inputs); first (bottom) layer, silicon FET logic. (credit: Max M. Shulaker et al./Nature)

A radical new 3D chip that combines computation and data storage in vertically stacked layers — allowing for processing and storing massive amounts of data at high speed in future transformative nanosystems — has been designed by researchers at Stanford University and MIT.

The new 3D-chip design* replaces silicon with carbon nanotubes (sheets of 2-D graphene formed into nanocylinders) and integrates resistive random-access memory (RRAM) cells.

Carbon-nanotube field-effect transistors (CNFETs) are an emerging transistor technology that can scale beyond the limits of silicon MOSFETs (conventional chips), and promise an order-of-magnitude improvement in energy-efficient computation. However, experimental demonstrations of CNFETs so far have been small-scale and limited to integrating only tens or hundreds of devices (see earlier 2015 Stanford research, “Skyscraper-style carbon-nanotube chip design…”).

The researchers integrated more than 1 million RRAM cells and more than 2 million carbon-nanotube field-effect transistors in the chip, which they say makes it the most complex nanoelectronic system ever made with emerging nanotechnologies. RRAM is an emerging memory technology that promises high-capacity, non-volatile data storage, with improved speed, energy efficiency, and density, compared to dynamic random-access memory (DRAM).

Instead of requiring separate components, the RRAM cells and carbon nanotubes are built vertically over one another, creating a dense new 3D computer architecture** with interleaving layers of logic and memory. Ultradense through-chip vias (electrical interconnecting wires passing between layers) eliminate the high delay of conventional wiring between computer components.

The new 3D nanosystem can capture massive amounts of data every second, store it directly on-chip, perform in situ processing of the captured data, and produce “highly processed” information. “Such complex nanoelectronic systems will be essential for future high-performance, highly energy-efficient electronic systems,” the researchers say.

How to combine computation and storage

Illustration of separate CPU (bottom) and RAM memory (top) in current computer architecture (images credit: iStock)

The new chip design aims to replace current chip designs, which separate computing from data storage and link the two over limited-speed connections.

Separate 2D chips have been required because “building conventional silicon transistors involves extremely high temperatures of over 1,000 degrees Celsius,” explains Max Shulaker, an assistant professor of electrical engineering and computer science at MIT and lead author of a paper published July 5, 2017 in the journal Nature. “If you then build a second layer of silicon circuits on top, that high temperature will damage the bottom layer of circuits.”

By contrast, carbon nanotube circuits and RRAM can be fabricated at much lower temperatures: below 200 degrees Celsius. “This means they can be built up in layers without harming the circuits beneath,” says Shulaker.
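The stacking constraint Shulaker describes can be sketched as a simple check: each layer added on top must be fabricated at a temperature the circuits beneath can survive. The sketch below is illustrative only. The `Layer` class, the `can_stack` function, and the 400-degree tolerance are assumptions made for this example and do not come from the paper; the only figures taken from the article are "over 1,000 degrees Celsius" for silicon (entered here as 1,000) and "below 200" for carbon nanotube circuits and RRAM (entered here as 200).

```python
from dataclasses import dataclass

# Hypothetical limit: the highest temperature that already-built circuits are
# assumed to tolerate. The article gives no such figure; 400 is a placeholder.
ASSUMED_TOLERANCE_C = 400.0


@dataclass(frozen=True)
class Layer:
    name: str
    process_temp_c: float  # temperature needed to fabricate this layer


def can_stack(layers, tolerance_c=ASSUMED_TOLERANCE_C):
    """Return True if every layer above the bottom one is fabricated at or
    below the temperature the layers beneath it are assumed to tolerate."""
    # The bottom layer (index 0) has nothing underneath it, so it is exempt.
    return all(layer.process_temp_c <= tolerance_c for layer in layers[1:])


# Figures from the article, used as round bounds.
silicon = Layer("silicon FET logic", 1000.0)
cnfet_logic = Layer("CNFET logic", 200.0)
rram = Layer("RRAM", 200.0)
cnfet_sensors = Layer("CNFET sensors and logic", 200.0)

# Layer order from the figure caption, bottom to top.
nanosystem = [silicon, cnfet_logic, rram, cnfet_sensors]
silicon_on_silicon = [silicon, silicon]

print("nanosystem stackable:", can_stack(nanosystem))
print("silicon on silicon stackable:", can_stack(silicon_on_silicon))
```

Under these assumptions the four-layer nanosystem passes the check, while a second silicon layer on top of a first does not, which is the point of the quote above.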

Overcoming communication and computing bottlenecks

As applications analyze increasingly massive volumes of data, the limited rate at which data can be moved between different chips is creating a critical communication “bottleneck.” And with limited real estate on increasingly miniaturized chips, there is not enough room to place chips side-by-side.

At the same time, embedded intelligence in areas ranging from autonomous driving to personalized medicine is now generating huge amounts of data, but silicon transistors are no longer improving at the historic rate that they have for decades.

Instead, three-dimensional integration is the most promising approach to continue the technology-scaling path set forth by Moore’s law, allowing an increasing number of devices to be integrated per unit volume, according to Jan Rabaey, a professor of electrical engineering and computer science at the University of California at Berkeley, who was not involved in the research.

Three-dimensional integration “leads to a fundamentally different perspective on computing architectures, enabling an intimate interweaving of memory and logic,” he says. “These structures may be particularly suited for alternative learning-based computational paradigms such as brain-inspired systems and deep neural nets, and the approach presented by the authors is definitely a great first step in that direction.”

The new 3D design provides several benefits for future computing systems, including:

- Logic circuits made from carbon nanotubes can be an order of magnitude more energy-efficient than today’s logic made from silicon.

- RRAM is denser, faster, and more energy-efficient than conventional DRAM (dynamic random-access memory) devices.

- The dense through-chip vias (wires) can enable vertical connectivity that is 1,000 times denser than conventional packaging and chip-stacking solutions allow, which greatly improves the data communication bandwidth between vertically stacked functional layers. For example, each sensor in the top layer can connect directly to its respective underlying memory cell with an inter-layer via. This enables the sensors to write their data directly into memory in parallel and at high speed.

- The design is compatible in both fabrication and design with today’s CMOS silicon infrastructure.
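The bandwidth benefit of denser vertical connectivity can be illustrated with back-of-the-envelope arithmetic. The sketch below compares the time needed to move one sample from every sensor into memory over a limited number of shared links with the time needed when 1,000 times as many links are available. Only the sensor count (about 1 million) and the 1,000-fold density factor come from the article; the function `readout_time_s`, the per-transfer time, and the baseline link count are hypothetical values chosen for illustration, not measurements from the chip.

```python
def readout_time_s(num_sensors, links, seconds_per_transfer):
    """Time to move one sample from every sensor into memory when only
    `links` transfers can happen at the same time."""
    rounds = -(-num_sensors // links)  # ceiling division
    return rounds * seconds_per_transfer


NUM_SENSORS = 1_000_000      # "more than 1 million" sensors, per the article
DENSITY_FACTOR = 1_000       # "1,000 times" denser vias, per the article

# Hypothetical values, chosen only to make the arithmetic concrete.
TRANSFER_S = 1e-6            # assumed time for one transfer over one link
BASELINE_LINKS = 1_000       # assumed link count for conventional stacking

dense_links = BASELINE_LINKS * DENSITY_FACTOR  # one link per sensor here

conventional_s = readout_time_s(NUM_SENSORS, BASELINE_LINKS, TRANSFER_S)
stacked_s = readout_time_s(NUM_SENSORS, dense_links, TRANSFER_S)

print(f"shared links: {conventional_s:.6f} s per full readout")
print(f"dense vias:   {stacked_s:.6f} s per full readout")
print(f"speedup:      {conventional_s / stacked_s:.0f}x")
```

With one link per sensor, every sensor writes in a single round, so in this simplified model the speedup equals the density factor. Real gains would depend on details the article does not give.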

Shulaker next plans to work with Massachusetts-based semiconductor company Analog Devices to develop new versions of the system.

This work was funded by the Defense Advanced Research Projects Agency, the National Science Foundation, Semiconductor Research Corporation, STARnet SONIC, and member companies of the Stanford SystemX Alliance.

* As a working-prototype demonstration of the potential of the technology, the researchers took advantage of the ability of carbon nanotubes to also act as sensors. On the top layer of the chip, they placed more than 1 million carbon nanotube-based sensors, which they used to detect and classify ambient gases, a capability aimed at detecting signs of disease by sensing particular compounds in a patient’s breath, says Shulaker. By layering sensing, data storage, and computing, the chip was able to measure each of the sensors in parallel, and then write directly into its memory, generating huge bandwidth in just one device, according to Shulaker. The top layer could be replaced with additional computation or data storage subsystems, or with other forms of input/output, he explains.

** Previous R&D in 3D chip technologies and their limitations are covered here, noting that “in general, 3D integration is a broad term that includes such technologies as 3D wafer-level packaging (3DWLP); 2.5D and 3D interposer-based integration; 3D stacked ICs (3D-SICs), monolithic 3D ICs; 3D heterogeneous integration; and 3D systems integration.” The new Stanford-MIT nanosystem design significantly expands this definition.

Abstract of Three-dimensional integration of nanotechnologies for computing and data storage on a single chip

The computing demands of future data-intensive applications will greatly exceed the capabilities of current electronics, and are unlikely to be met by isolated improvements in transistors, data storage technologies or integrated circuit architectures alone. Instead, transformative nanosystems, which use new nanotechnologies to simultaneously realize improved devices and new integrated circuit architectures, are required. Here we present a prototype of such a transformative nanosystem. It consists of more than one million resistive random-access memory cells and more than two million carbon-nanotube field-effect transistors—promising new nanotechnologies for use in energy-efficient digital logic circuits and for dense data storage—fabricated on vertically stacked layers in a single chip. Unlike conventional integrated circuit architectures, the layered fabrication realizes a three-dimensional integrated circuit architecture with fine-grained and dense vertical connectivity between layers of computing, data storage, and input and output (in this instance, sensing). As a result, our nanosystem can capture massive amounts of data every second, store it directly on-chip, perform in situ processing of the captured data, and produce ‘highly processed’ information. As a working prototype, our nanosystem senses and classifies ambient gases. Furthermore, because the layers are fabricated on top of silicon logic circuitry, our nanosystem is compatible with existing infrastructure for silicon-based technologies. Such complex nano-electronic systems will be essential for future high-performance and highly energy-efficient electronic systems.