Two inventions deal with virtual-reality sickness

Single-eye view of a virtual environment before (left) and after (right) a dynamic field-of-view modification that subtly restricts the size of the image during image motion to reduce motion sickness (credit: Ajoy Fernandes and Steve Feiner/Columbia Engineering)

Columbia Engineering researchers announced earlier this week that they have developed a simple way to reduce VR motion sickness that can be applied to existing consumer VR devices, such as Oculus Rift, HTC Vive, Sony PlayStation VR, Gear VR, and Google Cardboard devices.

The trick is to subtly change the field of view (FOV), or how much of an image you can see, during visually perceived motion. In an experiment conducted by Computer Science Professor Steven K. Feiner and student Ajoy Fernandes, most of the participants were not even aware of the intervention.

What causes VR sickness is the clash between the visual motion cues that users see and the physical motion cues that they receive from their inner ears’ vestibular system, which provides our sense of motion, equilibrium, and spatial orientation. When the visual and vestibular cues conflict, users can feel quite uncomfortable, even nauseated.

Decreasing the field of view can decrease these symptoms, but can also decrease the user’s sense of presence (reality) in the virtual environment, making the experience less compelling. So the researchers worked on subtly decreasing FOV in situations when a larger FOV would be likely to cause VR sickness (when the mismatch between physical and virtual motion increases) and restoring the FOV when VR sickness is less likely to occur (when the mismatch decreases).
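The control idea in the paragraph above (narrow the FOV as the visual/vestibular mismatch grows, restore it as the mismatch shrinks) can be pictured with a small controller. This is a minimal sketch under stated assumptions, not the Columbia team's method: the function names, the linear mapping from mismatch to FOV, the 110° and 80° limits, and the shrink/restore rates are all hypothetical. For a seated user, the physical speed is simply zero, so any virtual motion counts as mismatch.

```python
"""Sketch of a dynamic field-of-view (FOV) restrictor controller.

Hypothetical illustration only: the mapping, limits and rates below are
assumptions, not the values used in the Columbia study.
"""


def motion_mismatch(virtual_speed, physical_speed):
    """Gap between perceived (virtual) and actual (physical) speed, in m/s."""
    return abs(virtual_speed - physical_speed)


def target_fov(mismatch, fov_max=110.0, fov_min=80.0, mismatch_full=1.0):
    """Map a mismatch to a target FOV in degrees.

    Linear ramp (assumed): full FOV at zero mismatch, fov_min once the
    mismatch reaches mismatch_full or more.
    """
    t = min(max(mismatch / mismatch_full, 0.0), 1.0)
    return fov_max - t * (fov_max - fov_min)


def step_fov(current, target, dt, shrink_rate=30.0, restore_rate=10.0):
    """Move the current FOV toward the target at a limited rate (degrees/second).

    Separate (assumed) rates let the view narrow faster than it is restored.
    """
    if target < current:
        return max(target, current - shrink_rate * dt)
    return min(target, current + restore_rate * dt)


if __name__ == "__main__":
    fov = 110.0
    # Seated user (physical speed 0) starts moving virtually at 1.5 m/s.
    for _ in range(5):
        fov = step_fov(fov, target_fov(motion_mismatch(1.5, 0.0)), dt=0.1)
    print(round(fov, 1))  # 95.0
```

Rate-limiting the change, rather than jumping straight to the target, is one plausible way to keep the intervention subtle; the actual speeds the researchers settled on are not given here.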

Columbia University | Combating VR Sickness through Subtle Dynamic Field-Of-View Modification

They developed software that functions as a pair of “dynamic FOV restrictors” that can partially obscure each eye’s view with a virtual soft-edged cutout. They then determined how much the user’s field of view should be reduced, and the speed with which it should be reduced and then restored, and tested the system in an experiment.
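A "virtual soft-edged cutout" can be sketched as a per-pixel opacity mask drawn over each eye's image: clear inside a circle, dark outside it, with a smooth ramp in between so the edge is not sharply visible. This is an assumed illustration, not the study's renderer: the smoothstep falloff, the pixel-based `radius` and `feather` parameters, and the function names are hypothetical. A controller would shrink `radius` to restrict the view and enlarge it to restore the view.

```python
"""Sketch of a soft-edged circular cutout, one per eye.

Hypothetical illustration: the smoothstep falloff and the radius/feather
parameters are assumptions, not the study's actual shader.
"""
import math


def smoothstep(edge0, edge1, x):
    """Hermite ramp from 0 (x <= edge0) to 1 (x >= edge1); needs edge1 > edge0."""
    t = min(max((x - edge0) / (edge1 - edge0), 0.0), 1.0)
    return t * t * (3.0 - 2.0 * t)


def cutout_opacity(x, y, cx, cy, radius, feather):
    """Opacity of the dark overlay at pixel (x, y).

    0 inside the clear circle, 1 beyond radius + feather, smooth in between.
    """
    d = math.hypot(x - cx, y - cy)
    return smoothstep(radius, radius + feather, d)


def cutout_mask(width, height, radius, feather):
    """Overlay opacities for a width x height eye buffer, centered on the image."""
    cx, cy = (width - 1) / 2.0, (height - 1) / 2.0
    return [
        [cutout_opacity(x, y, cx, cy, radius, feather) for x in range(width)]
        for y in range(height)
    ]
```

In a real headset this would run as a shader per eye, and the relation between cutout radius and visual angle depends on the headset's optics, which this sketch ignores.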

Most of the experiment participants who used the restrictors did not notice them, and all those who did notice them said they would prefer to have them in future VR experiences.

The study was presented at IEEE 3DUI 2016 (IEEE Symposium on 3D User Interfaces) on March 20, where it won the Best Paper Award.

Galvanic Vestibular Stimulation

A different, more ambitious approach was announced in March by vMocion, LLC, an entertainment technology company, based on the Mayo Clinic’s patented Galvanic Vestibular Stimulation (GVS) technology*, which electrically stimulates the vestibular system. vMocion’s new 3v Platform (virtual, vestibular and visual) was actually developed to add a “magical” sensation of motion in existing gaming, movies, amusement parks and other entertainment environments.

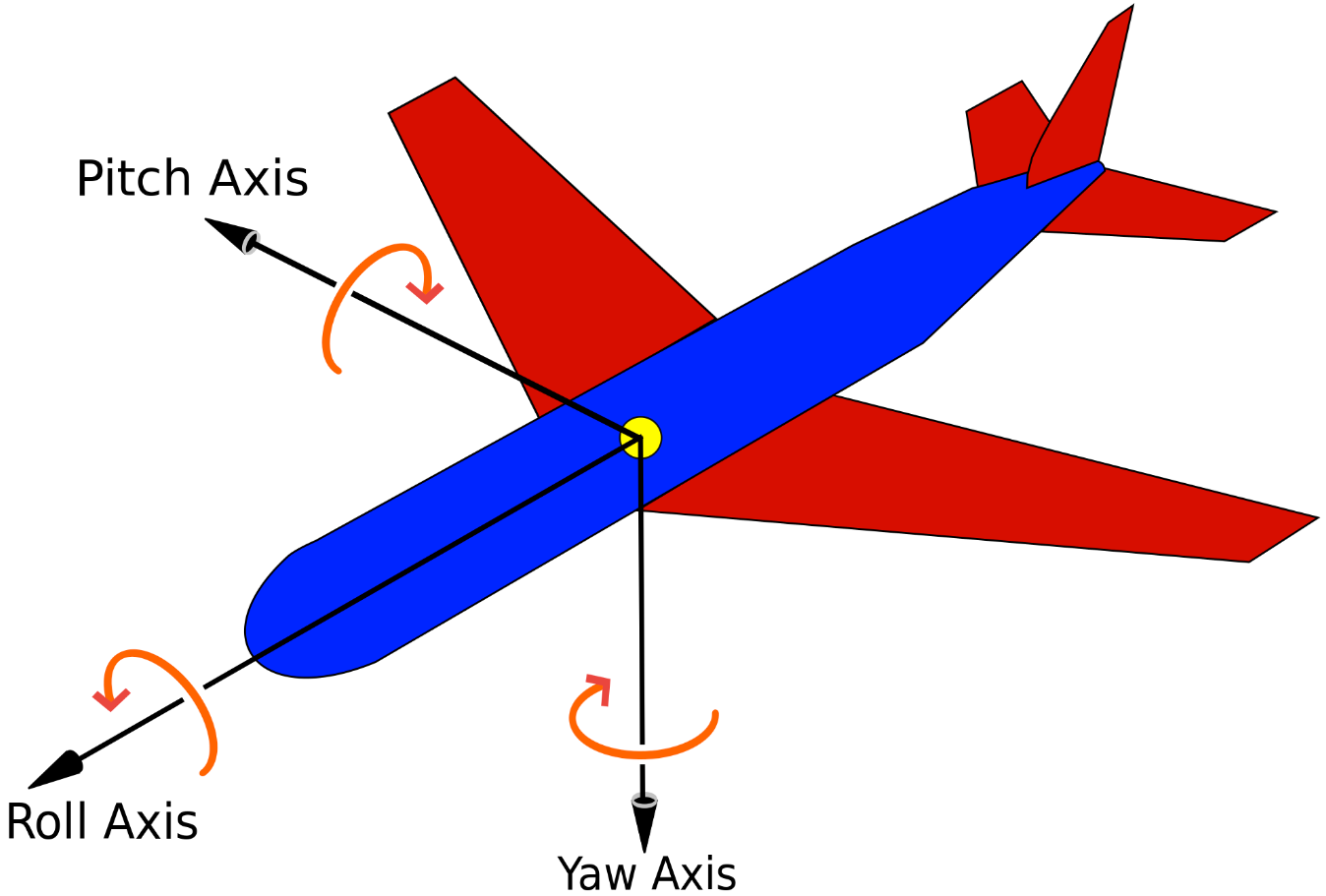

The 3v system can generate roll, pitch, and yaw sensations (credit: Wikipedia)

But it turns out GVS also works to reduce VR motion sickness. vMocion says it will license the 3v Platform to VR and other media and entertainment companies. The system’s software can be integrated into existing operating systems and added to existing devices such as head-mounted devices, along with smartphones, 3-D glasses, and TVs, says Bradley Hillstrom Jr., CEO of vMocion.

vMocion | Animation of Mayo Clinic’s Galvanic Vestibular Stimulation (GVS) Technology

Integrating into VR headsets

“vMocion is already in talks with companies in the gaming and entertainment industries,” Hillstrom told KurzweilAI, “and we hope to work with systems integrators and other strategic partners who can bring this technology directly to consumers very soon.” Hillstrom said the technology can be integrated into existing headsets and other devices.

Samsung has announced plans to sell a system using GVS, called Entrim 4D, although it’s not clear from the video (showing a Gear VR device) how it connects to the front and rear electrodes (apparently needed for pitch sensations).

Samsung | Entrim 4D

Mayo Clinic | The Story Behind Mayo Clinic’s GVS Technology & vMocion’s 3v Platform

* The technology grew out of decade-long medical research by Mayo Clinic’s Aerospace Medicine and Vestibular Research Laboratory (AMVRL) team, which consists of experts in aerospace medicine, internal medicine, and computational science, as well as neurovestibular specialists, in collaboration with Vivonics, Inc., a biomedical engineering company. The technology is based on work supported by grants from the U.S. Army and U.S. Navy.

Abstract of Combating VR sickness through subtle dynamic field-of-view modification

Virtual Reality (VR) sickness can cause intense discomfort, shorten the duration of a VR experience, and create an aversion to further use of VR. High-quality tracking systems can minimize the mismatch between a user’s visual perception of the virtual environment (VE) and the response of their vestibular system, diminishing VR sickness for moving users. However, this does not help users who do not or cannot move physically the way they move virtually, because of preference or physical limitations such as a disability. It has been noted that decreasing field of view (FOV) tends to decrease VR sickness, though at the expense of sense of presence. To address this tradeoff, we explore the effect of dynamically, yet subtly, changing a physically stationary person’s FOV in response to visually perceived motion as they virtually traverse a VE. We report the results of a two-session, multi-day study with 30 participants. Each participant was seated in a stationary chair, wearing a stereoscopic head-worn display, and used control and FOV-modifying conditions in the same VE. Our data suggests that by strategically and automatically manipulating FOV during a VR session, we can reduce the degree of VR sickness perceived by participants and help them adapt to VR, without decreasing their subjective level of presence, and minimizing their awareness of the intervention.

A low-cost ‘electronic nose’ spectrometer for home health diagnosis

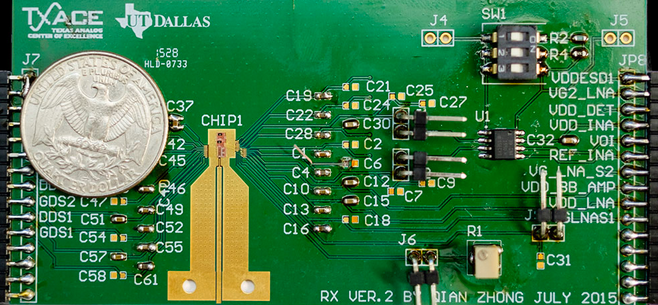

Current experimental design of transmitter radio-frequency front end for a rotational spectrometer. Using integrated circuits (such as the one below “CHIP1”) in an electronic nose promises to make the future device more affordable. (credit: UT Dallas)

UT Dallas researchers have designed an affordable “electronic nose” radio-frequency front end for a rotational spectrometer — used for detecting chemical molecules in human breath for health diagnosis.

Current breath-analysis devices are bulky and too costly for commercial use, said Kenneth O, PhD, a principal investigator of the effort and director of the Texas Analog Center of Excellence (TxACE). Instead, the researchers used CMOS integrated-circuit technology, which promises to make the device compact and affordable.

A rotational spectrometer generates and transmits electromagnetic waves over a wide range of frequencies and analyzes how the waves are attenuated (absorbed) to determine which chemicals are present in a sample and at what concentrations. The system can detect low levels of chemicals present in human breath.
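How attenuation turns into a concentration can be illustrated with the textbook Beer-Lambert relation, P_out = P_in × exp(−α·c·L), where α is the absorption coefficient per unit concentration, c the concentration, and L the path length through the sample. This is a minimal sketch assuming simple single-frequency Beer-Lambert behaviour; the article does not describe the UT Dallas signal processing, and the function names and parameter values are hypothetical.

```python
"""Sketch: estimating a concentration from measured attenuation.

Assumes simple Beer-Lambert absorption at one frequency; the absorption
coefficient and path length are hypothetical inputs, not values from the
UT Dallas design.
"""
import math


def absorbance(p_in, p_out):
    """Natural-log absorbance ln(P_in / P_out) for one frequency channel."""
    if p_in <= 0 or p_out <= 0:
        raise ValueError("powers must be positive")
    return math.log(p_in / p_out)


def concentration(p_in, p_out, alpha_per_unit, path_length):
    """Solve P_out = P_in * exp(-alpha_per_unit * c * L) for c.

    alpha_per_unit: absorption coefficient per unit concentration per unit length.
    path_length: distance the wave travels through the sample (same length unit).
    """
    return absorbance(p_in, p_out) / (alpha_per_unit * path_length)
```

Repeating this at each absorption line across the frequency sweep gives a concentration estimate per detected molecule; real instruments must also correct for baseline losses that this sketch ignores.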

Human breath contains information about practically every part of the body, and an electronic nose can detect gas molecules with more specificity and sensitivity than breathalyzers, which can, for example, mistake acetone for ethanol (the active ingredient of alcoholic drinks). This is important for patients with Type 1 diabetes, who can have high concentrations of acetone in their breath.
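One simple way to picture the specificity advantage is that rotational spectra consist of sharp absorption lines, so a molecule can be identified by requiring its whole pattern of lines rather than a single feature it might share with another molecule. The sketch below is an assumed illustration, not the researchers' algorithm; the molecule names and line frequencies in the usage example are hypothetical placeholders, not real acetone or ethanol data, and the tolerance and threshold defaults are arbitrary.

```python
"""Sketch: identifying molecules by their pattern of absorption lines.

Molecule names and line frequencies used with this function are
hypothetical placeholders, not real acetone or ethanol data.
"""


def has_line(spectrum, line_ghz, tolerance_ghz, threshold):
    """True if any measured point within tolerance of line_ghz absorbs at least threshold."""
    return any(
        abs(freq - line_ghz) <= tolerance_ghz and a >= threshold
        for freq, a in spectrum.items()
    )


def detected(spectrum, reference_lines, tolerance_ghz=0.5, threshold=0.1):
    """Names of reference molecules whose every listed line is present.

    spectrum: {frequency_GHz: absorbance}
    reference_lines: {name: [line frequencies in GHz]}
    """
    return [
        name
        for name, lines in reference_lines.items()
        if all(has_line(spectrum, ln, tolerance_ghz, threshold) for ln in lines)
    ]


if __name__ == "__main__":
    spectrum = {210.0: 0.30, 240.0: 0.01, 265.0: 0.40}
    refs = {"molecule_A": [210.0, 265.0], "molecule_B": [210.0, 240.0]}
    # molecule_B shares the 210 GHz line but lacks absorption at 240 GHz.
    print(detected(spectrum, refs))  # ['molecule_A']
```

A detector that looked only at the shared 210 GHz feature would report both molecules; checking every line rules out molecule_B.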

The current research focuses on the design of a 200–280 GHz transmitter radio-frequency front end.

Future home use predicted

The researchers envision that the CMOS-based device will first be used in industrial settings, and then in doctors’ offices and hospitals. As the technology matures, the devices could be used in homes. Dr. O said the need for blood work and gastrointestinal tests, for example, could be reduced, and diseases could be detected earlier, lowering the costs of health care.

The researchers plan to have a prototype programmable electronic nose available for beta testing in early 2018.

The research is supported by the Semiconductor Research Corporation, Texas Instruments, and Samsung Global Research Outreach. The research team includes members at UT Southwestern, Ohio State University, and Wright State University.

The research was presented Wednesday in an open-access paper at the 2016 IEEE Symposia on VLSI Technology and Circuits in Honolulu, Hawaii.
