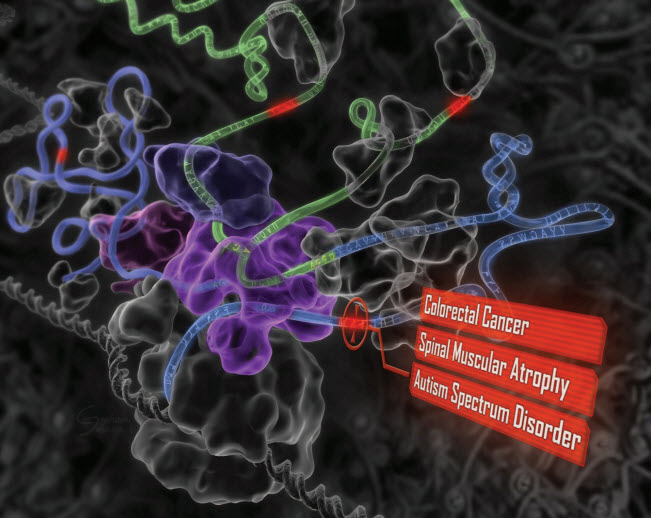

Disruptive potential of environmental exposures to mixtures of chemicals (credit: William H. Goodson III et al./Carcinogenesis)

Common environmental chemicals assumed to be safe at low doses may act separately or together to disrupt human tissues in ways that eventually lead to cancer, according to a task force of almost 200 scientists from 28 countries.

In a nearly three-year investigation of the state of knowledge about environmentally influenced cancers, the scientists studied low-dose effects of 85 common chemicals not considered to be carcinogenic to humans.

Common chemicals

The researchers reviewed the actions of these chemicals against a long list of mechanisms that are important for cancer development. Drawing on hundreds of laboratory studies, large databases of cancer information, and models that predict cancer development, they compared the chemicals’ biological activity patterns to 11 known cancer “hallmarks” – distinctive patterns of cellular and genetic disruption associated with early development of tumors.

The chemicals included bisphenol A (BPA), used in plastic food and beverage containers; rotenone, a broad-spectrum insecticide; paraquat, an agricultural herbicide; and triclosan, an antibacterial agent used in soaps and cosmetics.

In their survey, the researchers learned that 50 of the 85 chemicals had been shown to disrupt the functioning of cells in ways that correlated with known early patterns of cancer, even at the low, presumably benign levels to which most people are exposed.

For 13 of them, the researchers found evidence of a dose-response threshold, a level of exposure at which a chemical is considered toxic by regulators. For the remaining 22, there was no dose-response information at all.
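As a quick check on these figures, the three groups can be tallied against the 85 chemicals reviewed and converted to the percentages quoted in the paper's abstract. This is only a minimal sketch: the counts come from the study, while the group labels, the `shares` helper, and the rounding to whole percentages are assumptions made for illustration.

```python
# Counts reported by the study for the 85 chemicals reviewed.
# The labels are illustrative, not the authors' terminology.
counts = {
    "low-dose effects": 50,
    "dose-response threshold": 13,
    "no dose-response information": 22,
}

# The three groups should account for every chemical reviewed.
total = sum(counts.values())


def shares(groups):
    """Return each group's share of the total as a whole-number percentage."""
    whole = sum(groups.values())
    return {name: round(100 * n / whole) for name, n in groups.items()}


percentages = shares(counts)
for name, pct in percentages.items():
    print(f"{name}: {counts[name]}/{total} = {pct}%")
```

The groups add up to 85, and the rounded shares match the 59%, 15% and 26% given in the abstract.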

Synergistic effects over time

“Our findings also suggest these molecules may be acting in synergy to increase cancer activity,” said William Bisson, an assistant professor and cancer researcher at Oregon State University and a team leader on the study. For example, EDTA, a metal-ion-binding compound used in manufacturing and medicine, interferes with the body’s repair of damaged genes.

“EDTA doesn’t cause genetic mutations itself,” said Bisson, “but if you’re exposed to it along with some substance that is mutagenic, it enhances the effect because it disrupts DNA repair, a key layer of cancer defense.”

Bisson said the main purpose of this study was to highlight gaps in knowledge of environmentally influenced cancers and to set forth a research agenda for the next few years. He added that more research is still necessary to assess early exposure and to understand early stages of cancer development.

The study is part of the Halifax Project, sponsored by the Canadian nonprofit organization Getting to Know Cancer. The organization’s mission is to advance scientific knowledge about cancer linked to environmental exposures. The team’s findings are published in an open-access paper in a special issue of the journal Carcinogenesis.

Risk assessment has historically focused on single chemicals and single modes of action, approaches that may underestimate cancer risk, said Bisson, an expert on computational chemical genomics (the modeling of biochemical molecular interactions in cancer processes). This study takes a different tack, examining the interplay over time of independent molecular processes triggered by low-dose exposures to chemicals.

“Cancer is a disease of diseases,” said Bisson. “It follows multi-step development patterns, and in most cases it has a long latency period. It has to be tackled from an angle that considers the complexity of these patterns.

“A better understanding of what’s driving things to the point where they get uncontrollable will be key for the development of effective strategies for prevention and early detection.”

Abstract of Assessing the carcinogenic potential of low-dose exposures to chemical mixtures in the environment: the challenge ahead

Lifestyle factors are responsible for a considerable portion of cancer incidence worldwide, but credible estimates from the World Health Organization and the International Agency for Research on Cancer (IARC) suggest that the fraction of cancers attributable to toxic environmental exposures is between 7% and 19%. To explore the hypothesis that low-dose exposures to mixtures of chemicals in the environment may be combining to contribute to environmental carcinogenesis, we reviewed 11 hallmark phenotypes of cancer, multiple priority target sites for disruption in each area and prototypical chemical disruptors for all targets, this included dose-response characterizations, evidence of low-dose effects and cross-hallmark effects for all targets and chemicals. In total, 85 examples of chemicals were reviewed for actions on key pathways/mechanisms related to carcinogenesis. Only 15% (13/85) were found to have evidence of a dose-response threshold, whereas 59% (50/85) exerted low-dose effects. No dose-response information was found for the remaining 26% (22/85). Our analysis suggests that the cumulative effects of individual (non-carcinogenic) chemicals acting on different pathways, and a variety of related systems, organs, tissues and cells could plausibly conspire to produce carcinogenic synergies. Additional basic research on carcinogenesis and research focused on low-dose effects of chemical mixtures needs to be rigorously pursued before the merits of this hypothesis can be further advanced. However, the structure of the World Health Organization International Programme on Chemical Safety ‘Mode of Action’ framework should be revisited as it has inherent weaknesses that are not fully aligned with our current understanding of cancer biology.