In vivo 1.3-μm VCSEL SS-OCT imaging of a 12-week-old adult mouse with cranial window preparation. (a) Representative OCT image visualizing morphological details of the cerebral cortex and the brain compartments beneath it. (b) OCT brain anatomy showing good correlation with a photomicrograph of a Nissl-stained histology section of a mouse brain from the same strain. (credit: Allen Institute for Brain Science/Journal of Biomedical Optics)

University of Washington (UW) researchers have developed a noninvasive light-based imaging technology that can see inside the living brain at more than twice the depth of conventional optical coherence tomography, providing a new tool to study how conditions such as dementia, Alzheimer’s disease, and brain tumors change brain tissue over time.

The work was reported Oct. 8 by Woo June Choi and Ruikang Wang of the UW Department of Bioengineering in the Journal of Biomedical Optics, published by SPIE, the international society for optics and photonics.

Noninvasive deep imaging

According to the authors, this new optical coherence tomography (OCT) approach to brain study may allow for examining acute and chronic morphological or functional vascular changes in the deep brain.

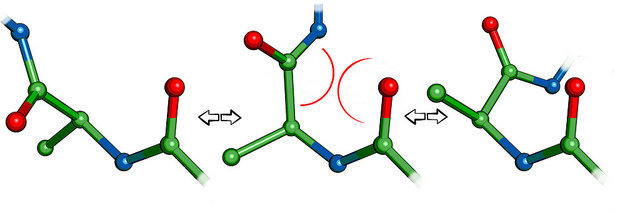

OCT is normally used to obtain subsurface images of biological tissue at about the same resolution as a low-power microscope, and it can instantly deliver cross-sectional images of tissue layers without invasive surgery or ionizing radiation. OCT images are formed from light reflected directly from structures beneath the surface.

Widely used in clinical ophthalmology, OCT has recently been adapted for brain imaging in small animal models. Its application in neuroscience has been limited, however, because conventional OCT technology hasn’t been able to image more than 1 millimeter below the surface of biological tissue.

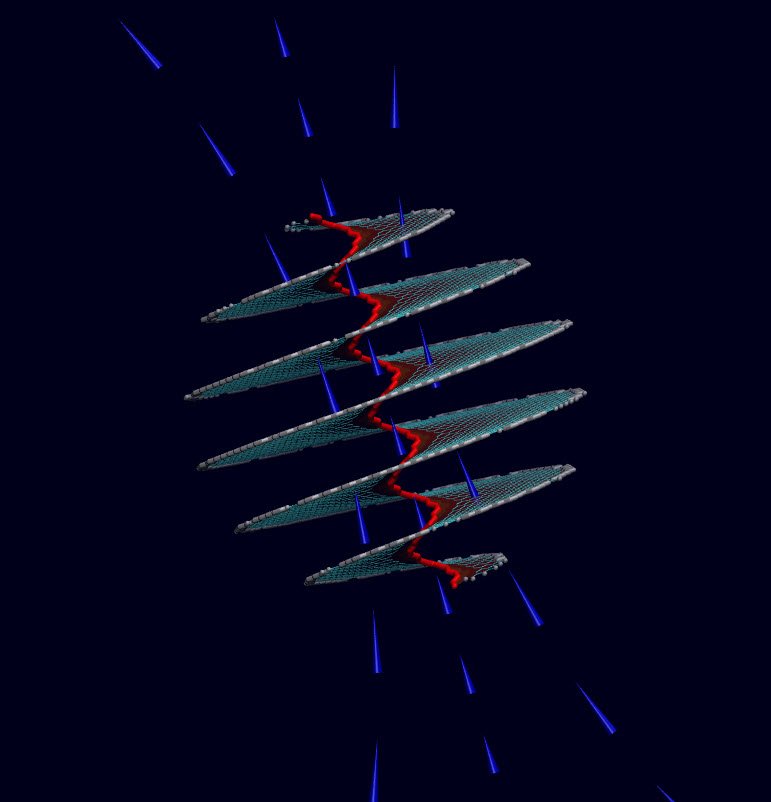

Portion of schematic of the 1.3-μm vertical cavity surface emitting laser (VCSEL) swept-source optical coherence tomography (SS-OCT) system. FC: optical fiber coupler; BD: dual balanced detector; DAQ: data acquisition. (credit: Woo June Choi and Ruikang K. Wang/Journal of Biomedical Optics)

In the paper, Choi and Wang describe how a new technique called “swept-source OCT” (SS-OCT) powered by a vertical-cavity surface-emitting laser (VCSEL) increases signal sensitivity, extending the imaging depth range to more than 2 millimeters. That may make it possible to do things that have been barely attempted in the OCT community, such as noninvasive imaging of the mouse hippocampus or full-length imaging of a human eye from cornea to retina.

It could also allow researchers to monitor deeper morphological changes caused by diseases such as Alzheimer’s disease and dementia, and even to study the effects of aging on the brain.

Abstract of Swept-source optical coherence tomography powered by a 1.3-μm vertical cavity surface emitting laser enables 2.3-mm-deep brain imaging in mice in vivo

We report noninvasive, in vivo optical imaging deep within a mouse brain by swept-source optical coherence tomography (SS-OCT), enabled by a 1.3-μm vertical cavity surface emitting laser (VCSEL). VCSEL SS-OCT offers a constant signal sensitivity of 105 dB throughout an entire depth of 4.25 mm in air, ensuring an extended usable imaging depth range of more than 2 mm in turbid biological tissue. Using this approach, we show deep brain imaging in mice with an open-skull cranial window preparation, revealing intact mouse brain anatomy from the superficial cerebral cortex to the deep hippocampus. VCSEL SS-OCT would be applicable to small animal studies for the investigation of deep tissue compartments in living brains where diseases such as dementia and tumor can take their toll.
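The abstract quotes the depth range twice: 4.25 mm in air, and more than 2 mm usable in tissue. Part of that gap is simple optics, since an OCT depth axis measures optical path length, so the same range covers less physical distance in a medium with a higher refractive index. The rest comes from scattering, which this calculation does not model. The sketch below only illustrates the refractive-index conversion; the function name and the tissue index of 1.35 are assumptions chosen for illustration, not values from the paper.

```python
def tissue_depth_mm(depth_in_air_mm: float, refractive_index: float) -> float:
    """Convert a depth range quoted in air to geometric depth in a medium.

    OCT measures optical path length, so geometric depth is the
    in-air range divided by the medium's refractive index.
    """
    if refractive_index < 1.0:
        raise ValueError("refractive index must be at least 1.0")
    return depth_in_air_mm / refractive_index


AIR_RANGE_MM = 4.25          # depth range in air, from the abstract
ASSUMED_TISSUE_INDEX = 1.35  # assumption for illustration, not from the paper

depth = tissue_depth_mm(AIR_RANGE_MM, ASSUMED_TISSUE_INDEX)
print(f"Geometric range in tissue: {depth:.2f} mm")  # prints 3.15 mm
```

Under that assumed index, the instrument's range would span roughly 3 mm of tissue. The reported usable depth of more than 2 mm is smaller because turbid tissue attenuates the signal well before the end of that range.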